What a robots.txt File Is and Why It Matters

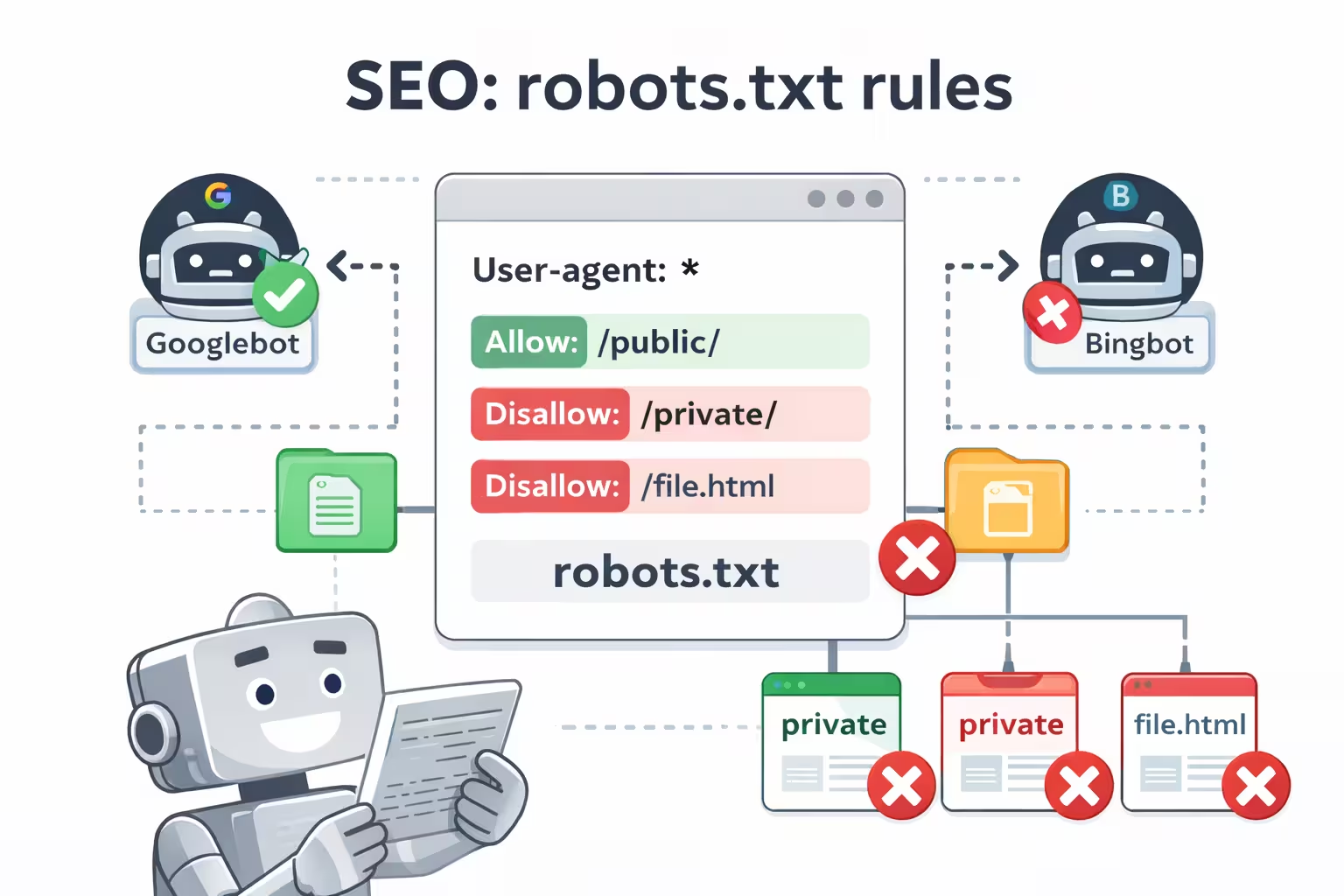

A robots.txt file is a small but powerful tool that tells search engines how to crawl your website. It contains instructions, known as directives, that indicate which pages or sections of your site crawlers are allowed to access and which they should avoid.

If no robots.txt file exists, search engines will attempt to crawl the entire website. Most major search engines respect robots.txt directives, but the directives are advisory rather than enforced, so some crawlers may ignore them.
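To make the idea of directives concrete, here is a minimal sketch that parses a small rule set with Python's standard `urllib.robotparser` module. The rules, the paths, and the example.com URLs are assumptions made up for illustration; they are not taken from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only: every crawler ("*") is asked
# to stay out of two directories and may crawl everything else.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /staging/
"""


def is_allowed(url: str, user_agent: str = "*") -> bool:
    """Return True if the rules above permit user_agent to crawl url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://www.example.com/blog/hello-world/",
        "https://www.example.com/staging/new-design/",
    ):
        print(url, "allowed" if is_allowed(url) else "disallowed")
```

Note that this only reports what the rules request. Whether a given crawler actually honors them is up to that crawler.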

Why robots.txt Is Important for Your Site

From an SEO perspective, robots.txt plays an essential role in guiding how search engines interact with your site. A properly configured robots.txt file helps you:

- Control crawling by keeping duplicate or unnecessary pages out of the crawl

- Keep crawlers away from areas such as login pages, test environments, or internal tools (robots.txt is publicly readable, so it is not a substitute for access control)

- Optimize server resources by reducing unnecessary bot traffic

Important: Incorrect changes to robots.txt can make large portions of your website inaccessible to search engines, which may negatively impact visibility and traffic.
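One way to guard against this kind of mistake is to check a list of must-crawl URLs against the new rules before publishing them. The sketch below is one possible approach, not a feature of any particular plugin; the function name `blocked_urls` and the example URLs are hypothetical. It relies on Python's standard `urllib.robotparser`, which uses simple prefix matching and does not interpret wildcards, so treat the result as an approximation of how a search engine would read the file.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_text: str, must_crawl: list[str], user_agent: str = "*") -> list[str]:
    """Return the URLs from must_crawl that the given rules would disallow."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return [url for url in must_crawl if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    # Hypothetical pages that should always stay crawlable.
    important = [
        "https://www.example.com/",
        "https://www.example.com/blog/",
    ]
    # A single stray "Disallow: /" asks crawlers to skip the whole site.
    risky_rules = "User-agent: *\nDisallow: /\n"
    print(blocked_urls(risky_rules, important))
```

An empty list means none of the listed pages are affected by the proposed rules.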

Common robots.txt Challenges

Many websites encounter issues with robots.txt configuration. Most problems fall into three main categories:

- Misuse of wildcards that unintentionally block important sections of the site

- Unexpected changes made during development or site updates without proper review

- Non-standard directives that are not recognized by search engines and provide no real effect

These issues often occur because robots.txt is edited manually or buried inside complex SEO plugin settings.
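The wildcard problem is easier to see with a small matcher. The sketch below is a simplified model of the pattern syntax that major search engines document, where `*` matches any run of characters and a trailing `$` anchors the end of the URL. The function `rule_matches` and the sample paths are hypothetical, and real crawlers may differ in details such as percent-encoding and rule precedence.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Return True if a robots.txt path pattern matches a URL path.

    Simplified model: '*' matches any run of characters, a trailing '$'
    anchors the end of the URL, and everything else is a literal prefix.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal pieces and join them with ".*" for each wildcard.
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    if anchored:
        regex += "$"
    return re.match(regex, path) is not None


if __name__ == "__main__":
    # Intended to block /private/, but "/p*" also catches product pages.
    print(rule_matches("/p*", "/products/widget"))
    # The narrower literal rule leaves product pages alone.
    print(rule_matches("/private/", "/products/widget"))
```

Running a proposed pattern against a handful of important paths like this can reveal an overly broad wildcard before it goes live.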

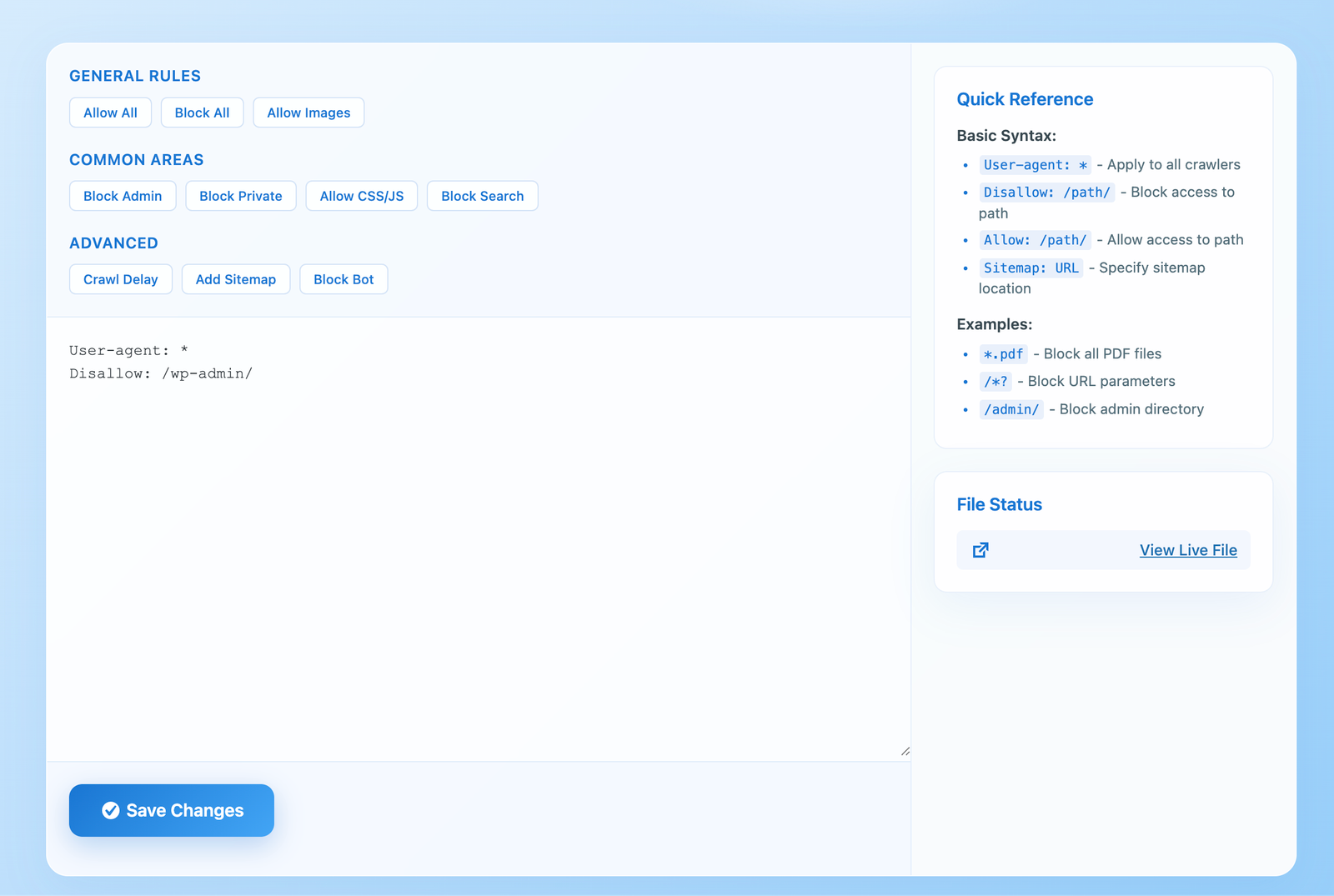

WPZone Robots is designed to make robots.txt management straightforward and predictable for WordPress users. It provides a focused, standalone solution without relying on large SEO plugin suites.

- Intuitive editor directly inside the WordPress dashboard

- Independent from SEO plugins such as Yoast or Rank Math

- Full manual control over crawler access rules

- Lightweight and focused, with no unnecessary features or SEO bloat

WPZone Robots allows non-technical users to manage crawler access safely, while giving developers and site owners predictable control over their robots.txt file.

Best Practices for Your robots.txt File

When creating or editing your robots.txt file, keep the following best practices in mind:

- Place the file in the root of your domain (e.g. https://www.example.com/robots.txt)

- Avoid blocking important internal pages that support navigation and internal linking

- Limit the use of directives like crawl-delay, which may be ignored or misinterpreted

- Regularly review your robots.txt file to detect accidental changes or conflicts
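Two of these practices lend themselves to a small script: finding the root-level robots.txt location for a site, and noticing when the file's contents change. The sketch below is a minimal example under stated assumptions. The helper names `robots_url` and `fingerprint` are hypothetical, and it leaves fetching the file and storing the previous fingerprint up to you.

```python
import hashlib
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the root-level robots.txt URL for the site that serves page_url."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fingerprint(robots_text: str) -> str:
    """Hash the file contents, ignoring trailing whitespace and line-ending style."""
    lines = (line.rstrip() for line in robots_text.strip().splitlines())
    normalized = "\n".join(lines)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    print(robots_url("https://www.example.com/blog/post?id=3"))
    reviewed = "User-agent: *\nDisallow: /wp-admin/\n"
    current = "User-agent: *\nDisallow: /\n"
    if fingerprint(current) != fingerprint(reviewed):
        print("robots.txt differs from the last reviewed version")
```

Comparing fingerprints on a schedule is one simple way to catch an edit that slipped in during development or a site update.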

Tip: Robots.txt should only be used for content that search engines should never crawl, such as login areas, test pages, or auto-generated URLs.

Cons of Not Using robots.txt

What happens when crawler access is left unmanaged

Exposure of non-public areas

Login pages, test sections, or internal URLs may be discovered and crawled unnecessarily.

Limited control over indexing

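Without a robots.txt file, you cannot steer crawlers away from duplicate or low-value URLs. Keep in mind that robots.txt controls crawling, not indexing, so search engines may still index a blocked URL if other pages link to it.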

Wasted resources and crawl budget

Excessive crawling can consume server resources and reduce indexing efficiency for important content.

Pros of Using robots.txt

Why robots.txt is a valuable tool for website management

Improved crawl efficiency

Helps search engines focus on important pages by avoiding unnecessary or low-value URLs.

Protection of sensitive areas

Prevents crawlers from accessing login pages, test environments, or internal tools.

Reduced server load

Limits excessive bot activity, helping preserve server resources and improve performance.

Conclusion

The robots.txt file is a powerful but delicate SEO tool. When used correctly, it improves crawl efficiency, protects sensitive content, and reduces unnecessary server load.

WPZone Robots makes robots.txt management simple, safe, and transparent on WordPress, giving you full control without adding SEO complexity or hidden dependencies.

Start using WPZone Robots today and ensure search engines crawl only what truly matters.

WPZone Robots

Easily manage and customize your WordPress robots.txt. Simple control for search engine crawlers.